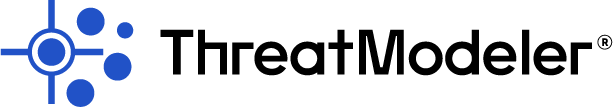

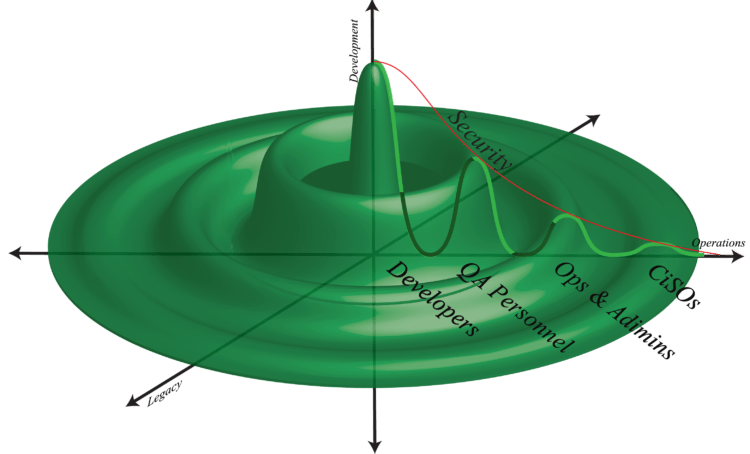

If there is such a thing as “traditional DevOps,” it is a two-dimensional beast. Consider, for example, the relatively simple two-dimensional graph shown below. Let development be represented on the vertical axis and operations be represented on the horizontal. Then stakeholder engagement with and benefit from various DevOps processes might be expressed as the area under the green line. A well-implemented DevOps culture provides engagement and benefits for all stakeholders, regardless of their function or position within the organization. Of course, that brings up the issue of how managing IT legacy systems and applications fits into the DevOps culture.

We know that, in a best-case DevOps scenario, security is integrated end-to-end. Six years of survey results gathered from more than 27,000 participants amply demonstrate that “baking in” security improves DevOps implementation and maximizes organizational benefits. [1] We can conceptually represent the end-to-end integration and automation of security with the DevOps workflow and toolsets as the red line on the above graph. The area under that line can then represent collaborative engagement between security and the DevOps / leadership personnel.

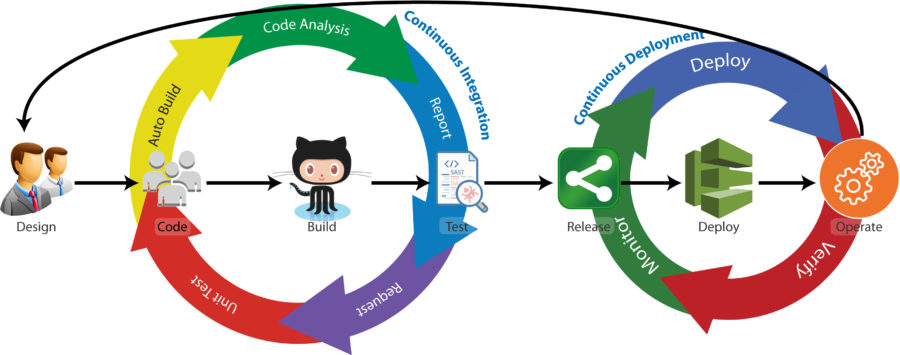

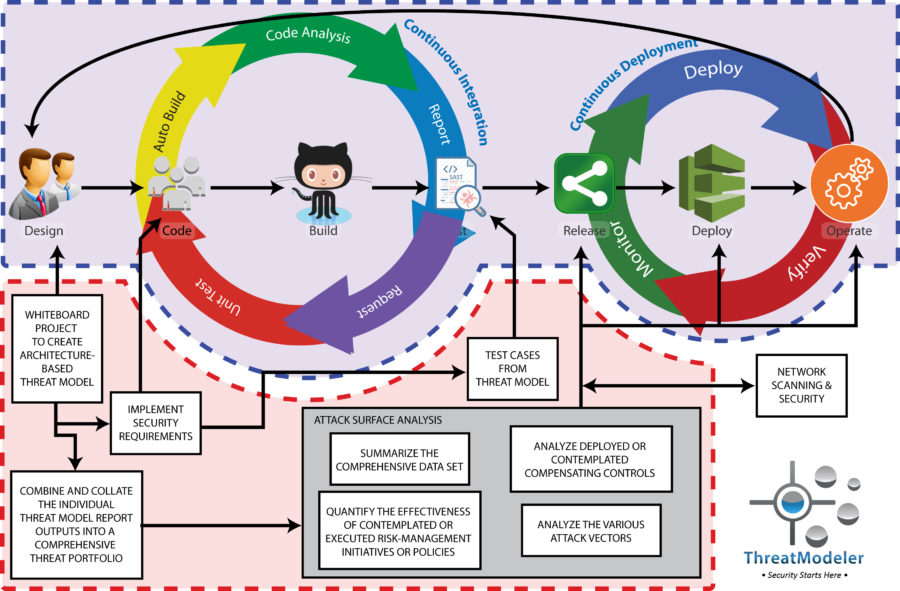

Throughout this series, we have developed the core concept that integrating security into the existing workflow and toolset produces improvements in the DevOps implementation. Improved DevOps yields a higher functioning IT ecosystem. Since every modern organization is a digital organization, improved IT ecosystems naturally result in an improved bottom line. Our series to date has considered six ways enterprise threat modeling improved DevOps implementation, including left-shifting and right-shifting security across the DevOps workflow, creating cross-functional collaboration, generating up-front QA, providing visibility for Ops, and empowering transformational leadership. In this, the final installment in the series, we show how enterprise threat modeling can be leveraged to include IT legacy systems and applications within the DevOps purview.

To Improve DevOps, Include Legacy Systems

Almost every DevOps discussion revolves around getting new projects into deployment or out to customers – invariably ignoring legacy systems. However, DevOps was never intended to be just a production methodology but rather an organizational culture change driving digital transformation. While limiting the DevOps purview to only “new” projects does demonstrate the benefits of implementation, it also cuts short realization of the full benefits. Unless your organization is a new digital unicorn, your IT environment includes IT legacy systems and applications upon which your organization’s daily operations depend.

If DevOps is to produce culture change throughout the organization, it needs to be more than a production assembly line. Incorporating IT legacy systems within the DevOps purview is a must-have if organizations are to realize the full benefits. However, most enterprises that have tried to bring their legacy IT into the DevOps fold have realized that theoretical talk is cheap. Legacy systems are far more static than anything being rolled off the CI/CD production line. Furthermore, legacy apps and systems are tightly coupled with coding blocks meant to run in batch mode – about as different from today’s cloud-based microservice architecture as possible. Trying to apply standard DevOps methodologies and toolsets out of the box to the legacy systems is sure to be an exercise in aggravation and futility. [2]

Today’s enterprise IT ecosystems are highly interconnected, both internally and externally, and are subject to a rapidly evolving threat landscape. Having separate IT silos, whether from a security perspective or a production perspective, is inefficient and short-sighted. [3] [4] Not only will the bi-directional dependencies between the legacy system and the DevOps portfolio create an insurmountable obstacle to realizing all the benefits of your DevOps implementation, but they may also increase attacker opportunities. The attacker population will not care about artificial functional silos. They will approach your IT ecosystem as an architectural whole and leverage the bi-directional process flows wherever possible.

Obviously, new deployment environments and apps need to be secured. Legacy systems and apps need to be just as secure. It is impossible, though, to truly secure one or the other if the bi-directional process flows are not also secured.
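To make the idea of bi-directional process flows concrete, here is a minimal sketch of how one might list the flows that cross the boundary between legacy systems and the DevOps portfolio. The component names, the `SILO` mapping, and the `Flow` type are all hypothetical and invented for illustration; they do not come from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """A directed process flow between two components (hypothetical type)."""
    source: str
    target: str


# Hypothetical inventory: which silo each component belongs to.
SILO = {
    "web-frontend": "devops",
    "orders-api": "devops",
    "billing-batch": "legacy",
    "mainframe-db": "legacy",
}

# Hypothetical process flows observed between those components.
FLOWS = [
    Flow("web-frontend", "orders-api"),
    Flow("orders-api", "billing-batch"),
    Flow("billing-batch", "orders-api"),
    Flow("billing-batch", "mainframe-db"),
]


def cross_silo_flows(flows, silo):
    """Return the flows whose two endpoints sit in different silos."""
    return [f for f in flows if silo[f.source] != silo[f.target]]


def bidirectional_pairs(flows, silo):
    """Return component pairs linked in both directions across silos."""
    crossing = {(f.source, f.target) for f in cross_silo_flows(flows, silo)}
    return sorted(
        {tuple(sorted(pair)) for pair in crossing if (pair[1], pair[0]) in crossing}
    )


for pair in bidirectional_pairs(FLOWS, SILO):
    print("Bi-directional flow across silos:", pair)
```

Every pair this reports is a path an attacker could follow from one silo into the other, which is why securing only one side of the boundary is not enough.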

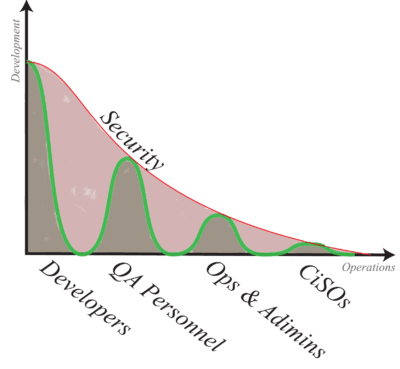

The inescapable conclusion enterprises implementing DevOps must face is that there is another dimension to consider beyond just Dev and Ops for new production. Enterprise IT systems include, and for the foreseeable future will continue to include, legacy systems upon which tightly coupled legacy applications running in batch mode operate. Thus, for enterprises whose existence predates the DevOps digital transformation, a modification to the above conceptual graph is needed. We, therefore, add legacy as a third axis to the original graph:

We now have a three-dimensional situation. The simple – and perhaps default – way to think about more complex cases is to just switch between multiple two-dimensional perspectives. However, developing a consistent solution instead requires us to include development, operations, and legacy IT simultaneously.

Enterprise DevOps: A Three-Dimensional Challenge

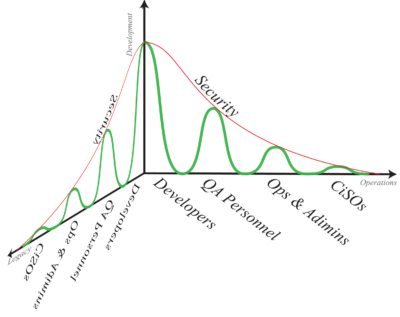

When a sophisticated analysis requires creating a 3D graph, we seldom see all the data points lining up only on two orthogonal planes as shown above. Usually, the data points occupy the space between as well. That is why it is insufficient to deal with a three-dimensional problem by switching between multiple two-dimensional perspectives – there is simply much more complexity that needs to be taken into account. Conceptually, we can illustrate the added complexity in our DevOps graph by rotating the original graph a quarter turn around the horizontal axis:

If making sense of the new graph is proving difficult, be comforted – you are in plentiful company. Similarly, the complexities of implementing DevOps in an enterprise IT environment with legacy systems that are not going away anytime soon are difficult to understand fully. Organizations are challenged enough, it would seem, just to implement DevOps for their “new” projects. Trying to envelop the legacy IT into their DevOps culture just adds complexity.

However, organizational leaders need to consider whether their hard work to implement end-to-end collaborative security within their “new” DevOps leaves gaps in their quantifiable level of security. Can you have integrated security across both “new” projects and legacy systems?

The visionary, transformational leader will press on and seek to envelop the entire IT environment within the DevOps purview. Doing so is well worth the effort. The first step to realizing a comprehensive DevOps perspective is to get an analytical, enterprise-wide “big picture” view of the IT system which combines both new DevOps and legacy systems into an integrated whole.

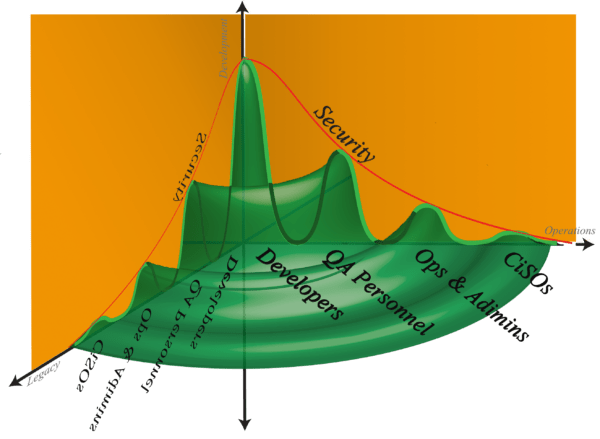

We can illustrate this by, again, considering our conceptual graph. Up to now we have included a third axis for legacy systems and rotated the original graph one-quarter turn. The graph turned complicated and messy. If, however, we continue rotating the original graph all the way around the vertical axis, we get a much easier-to-digest “big picture” – one that looks like the surface of water just after someone tossed in a small pebble. When we get a full “big picture” view, we still have a complicated situation, but we have the perspective needed to make sense of it.

Understanding the complexities of implementing a DevOps transformation in an enterprise environment replete with legacy systems and applications is similar. Leaders need to start with an enterprise-wide “big picture” view that gives them the proper perspective from which practical solutions may be drawn.

Enterprise DevOps Perspective on Legacy Systems

Trying to apply standard DevOps tools and processes to legacy systems and applications is futile. There is an enormous compatibility gap between legacy technology and DevOps tools. Replacing legacy IT with modern DevOps-type counterparts will, in most cases, be both too risky and cost-prohibitive. Doing nothing – or worse, attempting bimodal IT management as a long-term solution – will leave the organization further and further behind the competition.

There is, however, an excellent practical solution available through enterprise threat modeling with threat modeling methodologies like VAST. The VAST methodology is specifically designed to integrate with existing DevOps workflows and toolsets. Additionally, the VAST methodology works from an architectural basis, not an engineering, data-flow basis.

As we have shown throughout this series, enterprise threat modeling with this methodology works exceptionally well to provide specific, immediately actionable outputs end-to-end through the DevOps workflow and for CISOs and other senior leaders. Moreover, integrating with the right tooling extends the purview – and outputs – of enterprise threat modeling to legacy systems and applications equally well.

Thinking Three Dimensionally: Incorporating Legacy into DevOps

Automatically building detailed threat models from architecturally-based process flow diagrams works particularly well when the project is still in the design whiteboarding phase. For legacy IT, however, it may be impossible to create an architectural diagram by hand: the original documentation may be missing, outdated, or made irrelevant through multiple upgrades, patches, and new interfaces. Furthermore, it may not be economically practical to invest limited architectural or engineering resources to diagram all the legacy items visually. However, with the right tooling, it is possible to automatically bring the legacy apps and systems into the DevOps fold.

Finding tooling for legacy DevOps has long been a challenge. However, that is quickly changing as startups and established IT product companies invest more in this space. In particular, there is an increasing number of application and network scanners on the market that can go beyond mere vulnerability scanning to output architectural information of the deployed environment. Simply creating detailed architectural scans, though, will not bring legacy apps and systems under the organization’s DevOps umbrella.

Enterprise threat modeling with the proper tool that can integrate with network and application scanners through a custom API, however, can be used to create architecturally-based process flow diagrams automatically. The threat modeling tool can then automatically create detailed threat models from the visual diagrams. The detailed threat models of the legacy items will be automatically incorporated into the threat modeling tool’s enterprise threat model portfolio, which informs the enterprise dashboard and feeds into the comprehensive attack surface analyzer. Moreover, once the individual legacy item threat models exist, stakeholders can drill down to understand any specific security concern or design consideration, or to see how to implement new business requirements – just as can be done by the DevOps team for any project cycling through the CI/CD pipeline.
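The flow described above (scanner output, then a process flow diagram, then a threat model, then the enterprise portfolio) can be sketched roughly as follows. This is only an illustration under stated assumptions: the shape of the scanner output, the `ThreatModel` class, the function names, and the single "plaintext protocol" rule are all hypothetical. It does not represent the API of ThreatModeler or of any real scanner.

```python
from dataclasses import dataclass, field


@dataclass
class ThreatModel:
    """Hypothetical container for one legacy item's threat model."""
    name: str
    components: list
    flows: list
    threats: list = field(default_factory=list)


# Assumed rule for illustration only: protocols treated as unencrypted.
PLAINTEXT_PROTOCOLS = {"ftp", "telnet", "http"}


def build_diagram(scan):
    """Turn (hypothetical) scanner output into components and directed flows."""
    components = sorted({host["name"] for host in scan["hosts"]})
    flows = [
        (conn["src"], conn["dst"], conn["protocol"].lower())
        for conn in scan["connections"]
    ]
    return components, flows


def build_threat_model(name, scan):
    """Derive a simple threat model from the diagram using one toy rule."""
    components, flows = build_diagram(scan)
    model = ThreatModel(name, components, flows)
    for src, dst, proto in flows:
        if proto in PLAINTEXT_PROTOCOLS:
            model.threats.append(f"Unencrypted {proto} flow from {src} to {dst}")
    return model


def add_to_portfolio(portfolio, model):
    """Register the model in an enterprise-wide portfolio keyed by name."""
    portfolio[model.name] = model
    return portfolio


# Hypothetical scan of a legacy billing system.
legacy_scan = {
    "hosts": [{"name": "billing-batch"}, {"name": "mainframe-db"}],
    "connections": [
        {"src": "billing-batch", "dst": "mainframe-db", "protocol": "FTP"},
        {"src": "billing-batch", "dst": "mainframe-db", "protocol": "TLS"},
    ],
}

portfolio = add_to_portfolio({}, build_threat_model("legacy-billing", legacy_scan))
for threat in portfolio["legacy-billing"].threats:
    print(threat)
```

A real tool would apply a full threat library rather than a single rule, but the design point is the same: once legacy items live in the same portfolio structure as new projects, the same dashboards and drill-downs can cover both.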

To learn more about how enterprise threat modeling can bring your legacy IT apps and systems into the DevOps fold, schedule a ThreatModeler demo today.

[1] Forsgren, Nicole. “2017 State of DevOps Report.” DevOps Research and Assessment (DORA). Puppet: Portland. 2017.

[2] Vadganadam, Aditya. “Devops in Legacy Systems: A Mission Impossible?” DevOps.com. Mediaops, LLC: Milwaukee. March 17, 2017.

[3] Humble, Jez. “The Flaw at the Heart of Bimodal IT.” Continuous Delivery. Jez Humble: San Francisco. April 3, 2016.

[4] Campbell, Mark A. “Saying Goodbye to Bimodal IT.” CIO Insight. QuinStreet Inc: Foster City. January 13, 2016.